How to build Generative QA Systems with OpenAI and Pinecone

Updating the Stateset Response AI Shopify App listing to leverage OpenAI embeddings and Pinecone’s vector database.

Transform text into numerical representation in vector space.
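To make "vector space" concrete, here is a minimal sketch of how two embeddings are compared with cosine similarity. The three-dimensional vectors and their names are made up for illustration; real `text-embedding-ada-002` vectors have 1536 dimensions.

```javascript
// Cosine similarity between two equal-length numeric vectors.
function cosineSimilarity(a, b) {
  if (a.length !== b.length) {
    throw new Error('Vectors must have the same length');
  }
  let dot = 0;
  let normA = 0;
  let normB = 0;
  for (let i = 0; i < a.length; i++) {
    dot += a[i] * b[i];
    normA += a[i] * a[i];
    normB += b[i] * b[i];
  }
  return dot / (Math.sqrt(normA) * Math.sqrt(normB));
}

// Toy "embeddings" (hypothetical values, not real model output).
const refundPolicy = [0.9, 0.1, 0.0];
const returnQuestion = [0.8, 0.2, 0.1];
const shippingTimes = [0.0, 0.2, 0.9];

console.log(cosineSimilarity(refundPolicy, returnQuestion)); // close to 1
console.log(cosineSimilarity(refundPolicy, shippingTimes));  // close to 0
```

Texts with similar meaning end up with vectors that point in a similar direction, which is what the vector database exploits when it looks up context for a question.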

The pattern is the following:

Create Embedding

Query Vector

Completions API
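The three steps can be sketched as one small function. This is an outline under assumptions, not the production code: `answerQuestion`, `embed`, `queryVectors` and `complete` are hypothetical names, and the three dependencies are passed in so the flow can be read and run without network access.

```javascript
// Orchestrates the pattern. Each dependency is an async function:
//   embed(text)          -> array of numbers
//   queryVectors(vector) -> array of matches with metadata.text
//   complete(prompt)     -> the model's answer
async function answerQuestion(question, { embed, queryVectors, complete }) {
  const vector = await embed(question);        // 1. Create Embedding
  const matches = await queryVectors(vector);  // 2. Query Vector
  const context = matches.map((m) => m.metadata.text).join('\n\n---\n\n');
  const prompt =
    'Answer the question based on the context below.\n\n' +
    `Context:\n${context}\n\nQuestion: ${question}\nAnswer:`;
  return complete(prompt);                     // 3. Completions API
}

// Stub dependencies standing in for OpenAI and Pinecone.
const stubs = {
  embed: async () => [0.1, 0.2, 0.3],
  queryVectors: async () => [{ metadata: { text: 'Refunds take 5 days.' } }],
  complete: async (prompt) => `(received a prompt of ${prompt.length} characters)`,
};

answerQuestion('How long do refunds take?', stubs).then(console.log);
```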

I am implementing this pattern using Node.js serverless functions.

The idea is to build a context-enhanced query for the prompt from the vector database's response. To do this we will create three functions:

Upsert, Retrieve and Update

The Upsert function generates an embedding and saves it to Pinecone as a vector, with the original text stored in the vector's metadata.

The Retrieve function embeds the question, queries the vector database with that embedding, and returns the text from the matching vectors' metadata as context for the completions API.

The Update function generates an embedding, searches Pinecone for the ID of the closest existing vector, and overwrites that vector's values and metadata with the new text.
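As a rough sketch, the three functions differ mainly in the JSON body they send to Pinecone. The helper names below are hypothetical; the field names follow the Pinecone REST endpoints used later in this post (`/vectors/upsert`, `/query` and `/vectors/update`).

```javascript
// Body for POST /vectors/upsert: "vectors" is an array of records.
function buildUpsertBody(id, values, text, user, namespace) {
  return { vectors: [{ id, values, metadata: { text, user } }], namespace };
}

// Body for POST /query: ask for metadata back, skip the raw vector values.
function buildQueryBody(vector, namespace, topK = 3) {
  return { namespace, vector, topK, includeValues: false, includeMetadata: true };
}

// Body for POST /vectors/update: new values plus metadata fields to set.
function buildUpdateBody(id, values, text, user, namespace) {
  return { id, values, setMetadata: { text, user }, namespace };
}

console.log(JSON.stringify(buildQueryBody([0.1, 0.2], 'customer_support')));
```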

I am using the chat functionality to call the /add, /answer and /update commands.

/add calls the Upsert API

/answer calls the Retrieve API

/update calls the Update API
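A minimal sketch of how a chat message could be mapped to one of these APIs. `parseCommand` and `COMMAND_ROUTES` are hypothetical names; the upsert and retrieve paths match the client code further down, while the update path is an assumption because the post does not show it.

```javascript
const COMMAND_ROUTES = new Map([
  ['/add', '/api/ai/embeddings/upsert'],
  ['/answer', '/api/ai/embeddings/retrieve'],
  ['/update', '/api/ai/embeddings/update'], // assumed path, not shown in the post
]);

// Splits "/command rest of message" into the API route and the message body.
// Returns null when the message does not start with a known command.
function parseCommand(message) {
  const trimmed = message.trim();
  const spaceIndex = trimmed.indexOf(' ');
  const command = spaceIndex === -1 ? trimmed : trimmed.slice(0, spaceIndex);
  const body = spaceIndex === -1 ? '' : trimmed.slice(spaceIndex + 1).trim();
  const route = COMMAND_ROUTES.get(command);
  return route ? { route, body } : null;
}

console.log(parseCommand('/add Refunds are processed within 5 business days.'));
console.log(parseCommand('just a normal chat message'));
```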

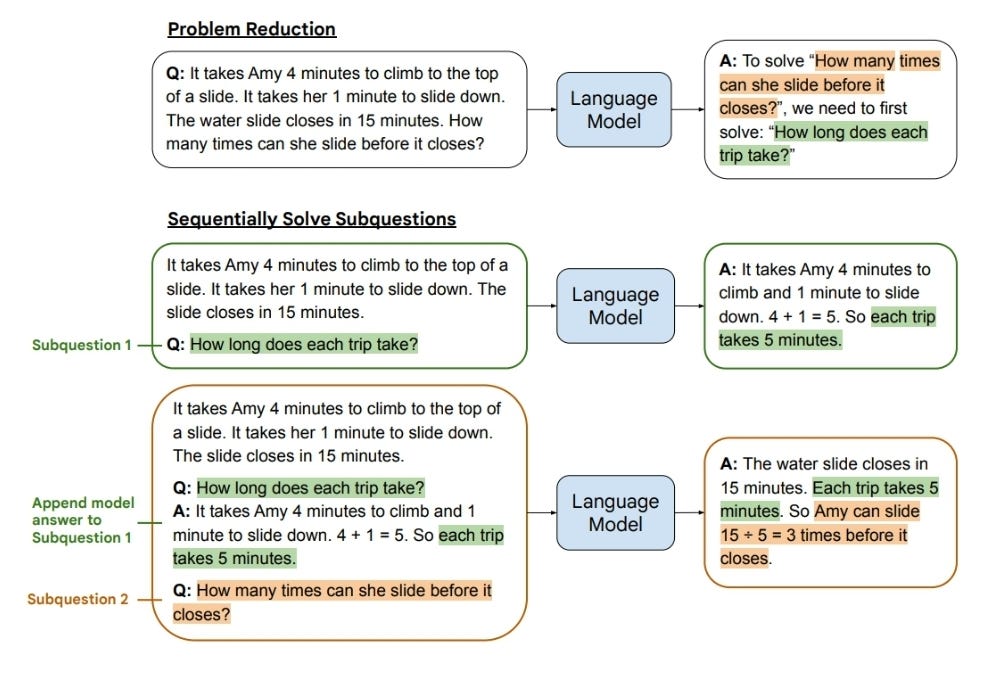

Chain of Thought can also be used when adding to the context:

This diagram made it click for me. Basically, you set the answer semantics in the prompt and then ask the question.

Saving answers in a vector database and feeding them into chain-of-thought prompts works great.
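A rough sketch of what such a prompt could look like. The `examples` array stands in for answers previously saved in the vector database; its `question`, `reasoning` and `answer` fields and the function name are assumptions for illustration, not the format the app actually stores.

```javascript
// Builds a few-shot prompt in which every saved example shows its reasoning
// before the final answer, then leaves the new question open.
function buildChainOfThoughtPrompt(examples, question) {
  const shots = examples.map(
    (ex) => `Q: ${ex.question}\nA: ${ex.reasoning} The answer is ${ex.answer}.`
  );
  return [...shots, `Q: ${question}\nA:`].join('\n\n');
}

const savedExamples = [
  {
    question: 'An order shipped on Monday with 3-day delivery. When does it arrive?',
    reasoning: 'Monday plus 3 days is Thursday.',
    answer: 'Thursday',
  },
];

console.log(
  buildChainOfThoughtPrompt(
    savedExamples,
    'An order shipped on Friday with 2-day delivery. When does it arrive?'
  )
);
```

The worked examples set the "answer semantics": the model sees the reasoning style it is expected to follow before it reaches the open question.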

Least to Most improves answer accuracy even more:

// Upsert Data
upsertData = async (client) => {
  const res = await fetch('/api/ai/embeddings/upsert', {
    method: 'POST',
    headers: { 'Content-Type': 'application/json' },
    body: JSON.stringify({
      "text": this.state.body,
      "user": this.state.user_id
    })
  });
  const gpt_answer_text = await res.json();
  this.handleResponse(res.status, 'Updated Vector Embeddings', client.mutate, false);
};

// Generate Answer
generateAnswer = async (client) => {
  const res = await fetch('/api/ai/embeddings/retrieve', {
    method: 'POST',
    headers: { 'Content-Type': 'application/json' },
    body: JSON.stringify({
      "question": this.state.body,
      "user": this.state.user_id
    })
  });
  const gpt_text = await res.json();
  this.handleResponse(res.status, gpt_text.choices[0].text.toString(), client.mutate, false);
};

Here are the three APIs where the magic happens:

upsert.js

// upsert.js
// Assumes `withAuth` (the auth wrapper used by this app) is imported above.
import { v4 as uuid } from 'uuid';

export default withAuth(async (req, res) => {
  if (!req.auth.sessionId) {
    return res.status(401).json({ id: null });
  }

  const text_input = req.body.text;
  const user = req.body.user;

  try {
    // Make callout to OpenAI to get the embedding
    console.log('Creating Embedding...');
    const embedding_response = await fetch("https://api.openai.com/v1/embeddings", {
      method: 'POST',
      headers: {
        'Content-Type': 'application/json',
        // OPEN_AI must hold the full header value: "Bearer <api key>"
        'Authorization': process.env.OPEN_AI
      },
      body: JSON.stringify({ "input": text_input, "model": "text-embedding-ada-002", "user": user })
    });
    const embedding_json = await embedding_response.json();
    const vectors_embeddings = embedding_json.data[0].embedding;

    // The text is stored in the metadata so it can be returned as context later
    const vectors_object = { id: uuid(), values: vectors_embeddings, metadata: { text: text_input, user: user } };
    console.log(vectors_object);

    // Make callout to Pinecone. "vectors" must be an array of records.
    // fetch sets Host and Content-Length itself, so they are not hard-coded.
    console.log('Upserting Pinecone...');
    const pinecone_response = await fetch(`https://${process.env.PINECONE_INDEX_NAME}/vectors/upsert`, {
      method: 'POST',
      headers: {
        'Content-Type': 'application/json',
        'Api-Key': process.env.PINECONE
      },
      body: JSON.stringify({ "vectors": [vectors_object], namespace: 'customer_support' })
    });
    console.log(await pinecone_response.text());
    if (!pinecone_response.ok) {
      return res.status(500).send('An error occurred');
    }
    return res.status(200).send({ 'status': 'updated_pinecone' });
  } catch (error) {
    // Stop here so a failed embedding call never reaches Pinecone
    console.error(error);
    return res.status(500).send('An error occurred');
  }
});
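One thing worth guarding against in the upsert flow is an error body coming back from OpenAI instead of an embedding. A minimal sketch, assuming the usual `{ error: { message } }` shape of OpenAI error responses; `extractEmbedding` is a hypothetical helper name.

```javascript
// Pulls the embedding out of a /v1/embeddings response body and throws
// with a readable message instead of passing undefined on to Pinecone.
function extractEmbedding(json) {
  if (json && json.error) {
    throw new Error(`OpenAI error: ${json.error.message}`);
  }
  const embedding = json && json.data && json.data[0] && json.data[0].embedding;
  if (!Array.isArray(embedding) || embedding.length === 0) {
    throw new Error('No embedding in response');
  }
  return embedding;
}

console.log(extractEmbedding({ data: [{ embedding: [0.1, 0.2, 0.3] }] }));
```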

retrieve.js

// Assumes `withAuth` (the auth wrapper used by this app) is imported above.
export default withAuth(async (req, res) => {
  if (!req.auth.sessionId) {
    return res.status(401).json({ id: null });
  }

  const limit = 3750; // maximum characters of context to put in the prompt
  const text = req.body.question;
  const user = req.body.user;

  const openai_headers = {
    'Content-Type': 'application/json',
    // OPEN_AI must hold the full header value: "Bearer <api key>"
    'Authorization': process.env.OPEN_AI
  };

  // Build our prompt with the retrieved contexts included
  function createContextQuery(contexts) {
    const prompt_start = (
      "Answer the question based on the context below.\n\n" +
      "Context:\n"
    );
    const prompt_end = (
      `\n\nQuestion: ${text}\nAnswer:`
    );
    // Keep contexts, in ranked order, until the joined text would reach the limit.
    // This also covers the case where only one context comes back.
    let count = 0;
    while (count < contexts.length &&
           contexts.slice(0, count + 1).join("\n\n---\n\n").length < limit) {
      count++;
    }
    return prompt_start + contexts.slice(0, count).join("\n\n---\n\n") + prompt_end;
  }

  try {
    // Make callout to OpenAI
    console.log('Creating Embedding...');
    const embedding_response = await fetch("https://api.openai.com/v1/embeddings", {
      method: 'POST',
      headers: openai_headers,
      body: JSON.stringify({ "input": text, "model": "text-embedding-ada-002", "user": user })
    });
    const embedding_json = await embedding_response.json();
    console.log(embedding_json);
    const xq = embedding_json.data[0].embedding;
    console.log('Embedding Created');

    // Make callout to Pinecone (fetch sets Host and Content-Length itself)
    console.log('Querying Pinecone...');
    const pinecone_query_response = await fetch(`https://${process.env.PINECONE_INDEX_NAME}/query`, {
      method: 'POST',
      headers: {
        'Content-Type': 'application/json',
        'Api-Key': process.env.PINECONE
      },
      body: JSON.stringify({ "namespace": "customer_support", "vector": xq, "includeValues": false, "includeMetadata": true, "topK": 3 })
    });
    const query_json = await pinecone_query_response.json();
    console.log(query_json);
    const contexts = query_json.matches.map(x => x.metadata.text);

    // Create prompt for OpenAI
    console.log('Creating Prompt...');
    const CONTEXT_PROMPT = createContextQuery(contexts);
    console.log(CONTEXT_PROMPT);

    console.log('Creating Completion...');
    const completion = await fetch("https://api.openai.com/v1/engines/text-davinci-003/completions", {
      method: 'POST',
      headers: openai_headers,
      body: JSON.stringify({ "prompt": CONTEXT_PROMPT, "max_tokens": 150, "temperature": 0, "top_p": 1, "frequency_penalty": 0, "presence_penalty": 0 })
    });
    const completion_json = await completion.json();
    console.log(completion_json);
    return res.status(200).json(completion_json);
  } catch (error) {
    console.error(error);
    return res.status(500).send('An error occurred');
  }
});
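The prompt-building step is the heart of retrieve.js, and it can be pulled out into a pure function that is easy to test on its own. This is a sketch rather than the code used above: `buildContextPrompt` and `SEPARATOR` are hypothetical names, and the 3750-character default mirrors the `limit` in retrieve.js.

```javascript
const SEPARATOR = '\n\n---\n\n';

// Adds contexts in ranked order until the joined text would reach `limit`
// characters, then wraps them in the question/answer template.
function buildContextPrompt(contexts, question, limit = 3750) {
  const kept = [];
  for (const context of contexts) {
    const candidate = [...kept, context].join(SEPARATOR);
    if (candidate.length >= limit) break;
    kept.push(context);
  }
  return (
    'Answer the question based on the context below.\n\n' +
    'Context:\n' +
    kept.join(SEPARATOR) +
    `\n\nQuestion: ${question}\nAnswer:`
  );
}

console.log(
  buildContextPrompt(
    ['Refunds are processed within 5 business days.', 'Returns are free within 30 days.'],
    'How long does a refund take?'
  )
);
```

Because the matches come back ordered by similarity, cutting from the end drops the least relevant context first.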

Lastly we have update.js

// The opening of createUpdate is not shown here. `text`, `user_id`,
// `pinecone_namespace`, `pinecone_index`, `pinecone_api_key` and `res`
// are assumed to be defined in that part.
  try {
    // Make callout to OpenAI
    console.log('Creating Embedding...');
    const embedding_response = await fetch("https://api.openai.com/v1/embeddings", {
      method: 'POST',
      headers: {
        'Content-Type': 'application/json',
        // OPEN_AI must hold the full header value: "Bearer <api key>"
        'Authorization': process.env.OPEN_AI
      },
      body: JSON.stringify({ "input": text, "model": "text-embedding-ada-002", "user": user_id })
    });
    const embedding_json = await embedding_response.json();
    console.log(embedding_json);
    const xq = embedding_json.data[0].embedding;
    console.log('Embedding Created');

    // fetch sets Host and Content-Length itself, so only these headers are needed
    const pinecone_headers = {
      'Content-Type': 'application/json',
      'Api-Key': pinecone_api_key
    };

    // Find the closest existing vector
    console.log('Querying Pinecone...');
    const pinecone_query_response = await fetch(`https://${pinecone_index}/query`, {
      method: 'POST',
      headers: pinecone_headers,
      body: JSON.stringify({ "namespace": pinecone_namespace, "vector": xq, "includeValues": false, "includeMetadata": true, "topK": 3 })
    });
    const query_json = await pinecone_query_response.json();
    console.log(query_json);
    const id = query_json.matches[0].id;

    // Overwrite that vector's values and metadata with the new embedding and text
    console.log('Updating Pinecone...');
    const pinecone_update_response = await fetch(`https://${pinecone_index}/vectors/update`, {
      method: 'POST',
      headers: pinecone_headers,
      body: JSON.stringify({ id: id, "values": xq, setMetadata: { "text": text, "user": user_id }, "namespace": pinecone_namespace })
    });
    console.log(await pinecone_update_response.text());
    return res.status(200).send({ 'status': 'updated_pinecone_vector' });
  } catch (error) {
    console.error(error);
    return res.status(500).send('An error occurred');
  }
}

export default createUpdate;
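Since the update overwrites whichever vector comes back first, it can help to check that the best match is actually close before touching it. A minimal sketch, assuming a cosine-similarity index where a higher `score` means a closer match; `pickMatchId` and the 0.8 default threshold are hypothetical and would need tuning.

```javascript
// Returns the id of the highest-scoring match, or null when the query
// returned nothing at or above `minScore`.
function pickMatchId(queryResponse, minScore = 0.8) {
  const matches = (queryResponse && queryResponse.matches) || [];
  let best = null;
  for (const match of matches) {
    if (match.score >= minScore && (best === null || match.score > best.score)) {
      best = match;
    }
  }
  return best ? best.id : null;
}

const sampleResponse = {
  matches: [
    { id: 'vec-1', score: 0.91 },
    { id: 'vec-2', score: 0.62 },
  ],
};

console.log(pickMatchId(sampleResponse));      // 'vec-1'
console.log(pickMatchId({ matches: [] }));     // null
```

When the helper returns null, the caller could fall back to an upsert instead of an update, so that unrelated text never replaces an existing answer.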

This is a powerful combination. Here is an example from https://chat.response.cx:

For more information on how to build generative QA with vector embeddings feel free to reach out: dom@stateset.io